The LLM line

Over the past few weeks, I’ve been experimenting with AI-assisted writing.

Yep; the same person who’s come down heavy-handed on internal blog posts that reek of stale, clinical prose; the one who calls out the eerie patterns that decouple effort and ownership; the one proud of the way they’ve cultivated their writing over time. You got me.

Dave… but… but why?

Despite my championing efforts, AI isn’t escaping the writing in the workplace. People reach for the AI-shaped writing tool because it’s often capable of stringing together enough convincing words that it does the job, maybe even better than they’d be capable of themselves. And, yes, at times, this is welcomed! I’d much rather receive a properly communicated paragraph with clarity and intent over something fuzzy and half-baked.

But creative writing? Storytelling? Musings, journaling, blogging, reflections? Is AI still the right tool for such a use case?

I regret to share the results.

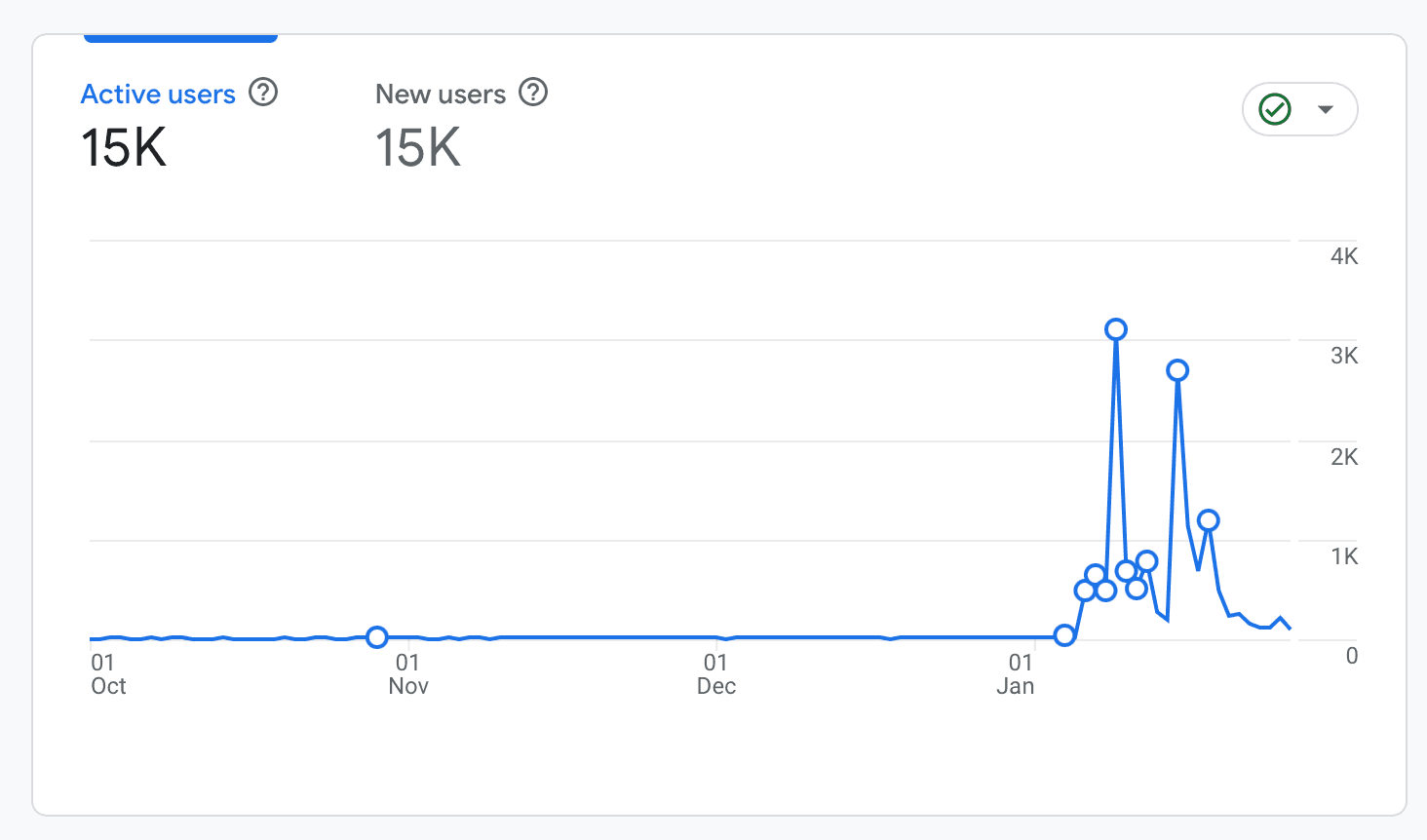

In the past 30 days, I’ve received more traffic and links than in the previous 365.

Yes, part of this is consistency. In January, I shipped at a much more regular cadence due to the frictionless process.

Yes, part of this is that agentic coding happens to be exploding right now, and writing about the topic is sure to catch some interest.

But could part of it also be that people just don’t necessarily care about the creative dilution? Is it better that the idea is communicated at all, rather than only having a fighting chance at life, duking it out with other priorities? Is this the easy way out? Is this me at all?

AI-assisted vs. AI-derived

My process has looked like this: crack open voice dictation and spew what’s on my mind. 5-10 minute recordings. Sometimes pacing around the house, sometimes staring into the fireplace, sometimes catching myself on one of my infamous brain stalls (don’t worry, those don’t get transcribed).

I end the diatribe asking Claude Opus 4.5 to make sense of what I just opined: ask me clarifying questions, allow me to fully form my stance, make my argument, show the value. We go back and forth; though I never allow it to fully take the wheel, it begins to know the route we are taking.

Once we’ve hit that point, when we’ve reached critical mass, I instruct Claude to create the markdown file to represent the post and place it in my blog project’s draft directory. I exit the whirlwind and let the thing cook. All that’s left as evidence of our time together is a single .mdx file generated by nondeterministic binary decisions.

I lightly edit the article, removing un-Daveisms (which is still mostly different than LLMisms - yes, I’m aware of the rehumanizing skills, but I wanna sound like me, not like not-LLM). As I scan the output, I already know in my gut the truth: this thing understood and represented me correctly.

It’s as if I spoke to a journalist. The story is based off of my words. But they’re sometimes reframed, slightly shuffled, certainly more grammatically correct, often with a tinge less color. But overall, it looks a lot like something I’d publish if I had 10x the amount of free time that I currently do. I commit, I push, done. Next idea.

Did I write the blog post? I don’t know. No? Kind of? “Yes” doesn’t truly feel right to claim. No, but I made it happen?

Friction

Suspected ADHD has been a real blocker in my shipping cadence, prioritization management, uh… hell, everything, over my entire lifetime. I have to admit, the frictionless publishing has been viscerally liberating.

My brain can race a mile a minute throughout the day, but I only seem to capture and quantify a tiny fraction of that experience. Throw in, you know, actual life things, a job, kids, hobbies, fitness, etc., and blog posts are one of the first-and-many’s to drop off the priority list.

However, even with my dictation process, publishing an AI-assisted article to my blog feels like a shallow farce. It’s as if I were a pursuant musician and had the whole concept for a song arranged in my head, but hired a professional band to perform it on my behalf. Something rooted in authenticity becomes ethereally lost in the in-between. The soul is filtered, tumbled, slipping into the cracks in the sidewalk.

Dude, give me the fuckin’ guitar. That’s my song to play, even if I’ll play it technically worse than you will. That is, of course, if I can find the time, energy, and wherewithal to pick it up in the first place.

Effectiveness

The truth is, these tools are 90%+ effective at producing better results than most of us are capable of. That’s not a dunk; we are at an output disadvantage. We need food, sleep, mental health resets, vacations, love. That’s, of course, unique to humans. And that’s also something to be embraced.

What’s fascinating are the perspectives and opinions of those who claim LLM output is complete trash. My wife shared this recent Garbage Day article with me, and while I really like the writing style, the argument is just not landing for me. It feels like the author isn’t a programmer. To expect that the LLM (remember, that’s Large Language Model) will have a precise, opinionated grip on output that adheres to your expectations, without any sort of controlled, precise, opinionated input to guide it through that Large dataset, is naive. The poor result then gets misconstrued as LLM incapability.

There’s something happening in this landscape that very closely resembles the Gap described by Ira Glass. People have big ideas, a grand vision of a possible outcome, and see LLMs as the Mecca bus. But when the output suffers because they don’t truly hold the skill (yet) to guide it in the appropriate direction, well, yeah, of course it’s gonna be dogshit.

I read critiques like the one from Garbage Day and I recognize the frustration, but I also know what happens when you actually learn to steer the thing. The gap closes fast.

Everyone keeps saying we’re at a pivotal point in career decisions, education, how things will be made etc. Possibly that programming isn’t a career worth pursuing anymore. Right now, I feel like that couldn’t be further from the truth. Use these tools and those who have been around longer than them to learn what it means to program, cook, design. Only then will you be able to truly steer the tool, and it will begin to know the route. And only then will the dread truly start to settle in.

Crisis

The introduction of LLMs has uprooted entire lifetimes, timelines, identities; myself included. People are admitting to real loss of identity. Companies that make this tech thrive are gutting their own teams. Engineers are calling for accountability.

In parallel, as if some kind of cruel hypocritical joke, we’re all lined up to pay for monthly access to spit out more code than ever humanly possible. And if you don’t, you’re at risk of being left behind entirely.

When are you to be okay with using these tools, and why is it only sometimes? When are you okay with the productivity gains they provide? Why is some output okay (i.e., agentic code) and other output shameful (i.e., agentic writing)?

The repetitive rote answer I keep hearing is “use it as a tool to assist you, not replace you.” Okay, but what the fuck does that really mean? Autotune exists, is that really me? Transit exists, am I shameful if I hitch a ride instead of walking?

Where do you draw the LLM line?

Art vs. task

Are you only using LLMs for things that you need to get done? Requirements? If it’s coming from your soul, is it still a bad place to introduce the tool? Is a painter’s paintbrush just as innocent?

Communication vs. expression

Are you trying to formally convey something to help unblock or inform someone? Are you taking time to express how you truly feel, a perspective you’ve formed over days, weeks, years? Does the tooling belong in one place and not the other?

Job vs. personal

Are you only okay with using AI in your workplace so you don’t lose your job? How is this different than being a Severed employee?

Thinking vs. feeling

Are you only okay with LLMs if they help bring output rooted in logic to exist? Is emotional output meant to stay untouched by these tools? How does this change between people who classify themselves as thinkers over feelers?

Efficiency vs. precision

Are you only okay with LLMs if they make you more efficient? If something needs fine-grained detail, is an LLM no longer an appropriate tool? Why?

Contribution vs. ownership

Are you only okay with LLMs if the output is something you don’t fully own? If the PR is going to a codebase under someone else’s IP? If the piece isn’t ending up in your own portfolio?

You are loved, even if you are worse

For someone who grew up on computers, watching this tech evolve has been a spectacle, and I can’t decide if it’s been more of a firework show or a train wreck. There are a lot of hard questions to ask ourselves as makers in this evolving era. Of ownership, pride, shortcuts, priorities, ethics, attention. Of skill, grit, determination, authenticity. Of what it means to be human in an agentic era. I don’t know the answers, but I want to remind you of this:

It’s okay to be human. Even if the output suffers. Even if the results are worse than they might’ve been using other approaches. Even if half-baked, unedited, imprecise. Perhaps the ultimate point of your output isn’t the artifact that gets published, committed, linked to in newsletters, shared on TwiX. Maybe it’s who you became after going through the process of making it. How you got better, grew as a thinker, unlocked something within. What you learned that you definitely will or will not do next time.

But it’s hard to ignore the efficiency. The effectiveness. The results. I’m not sure we won’t lose something essential in the trade.

This post was written by a human.